Scignabot

There are currently no effective therapies to improve the outcome of motor disabilities after brain damage from different causes, especially in the early stages and in the most severe forms. Evidence-based rehabilitation treatments require the patient to be able to produce some voluntary movement, while intensive training and non-invasive brain stimulation (NIBS) techniques report mixed results, with some improvement in functional outcome. Brain-robot interfaces (BRI) are an emerging technology that can effectively increase cortical plasticity using Hebbian-like learning rules and can improve recovery from brain injury, yet they are barely used, even in clinical settings.

The SCIGNABOT project builds on previous technological results developed jointly by the members of the consortium and funded through competitive projects under national and European (H2020) plans. The main components carried into this project are:

PUPArm (Robotic therapy and rehabilitation games)

PUPArm is a planar pneumatic robot with 2 degrees of freedom, designed for the neuromuscular rehabilitation of the upper part of the arms. It is part of a complete rehabilitation system that includes biofeedback, visual interfaces and virtual rehabilitation.

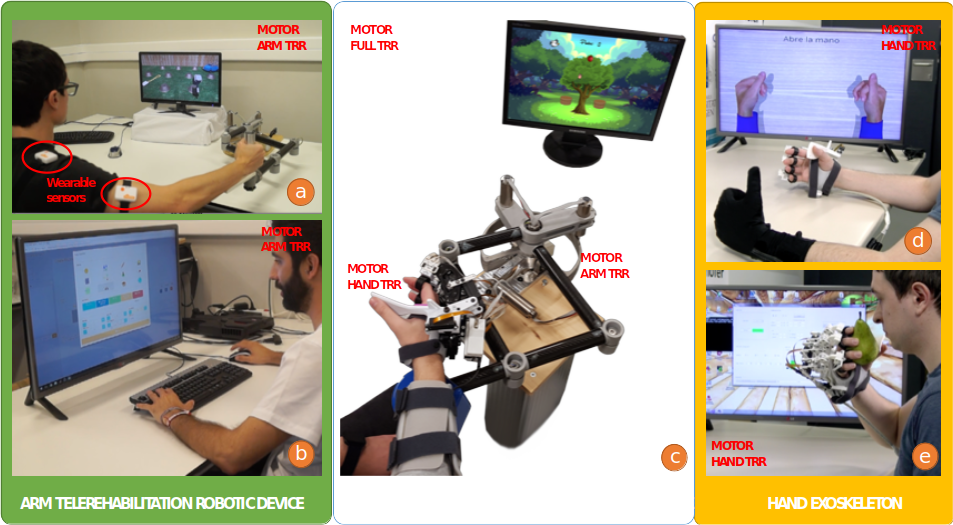

HOMEREHAB Project (Low Cost Exoskeleton)

HOMEREHAB is a new robotic tele-rehabilitation system that provides therapy at home to patients with stroke. The project will investigate the complex trade-off between the robotic design requirements of home systems and the performance required for optimal rehabilitation therapies, which current commercial systems designed for laboratories and hospitals do not take into account. In addition, the home setting requires intelligent monitoring of the patient's physiological status and adaptation of the rehabilitation therapy for optimal service.

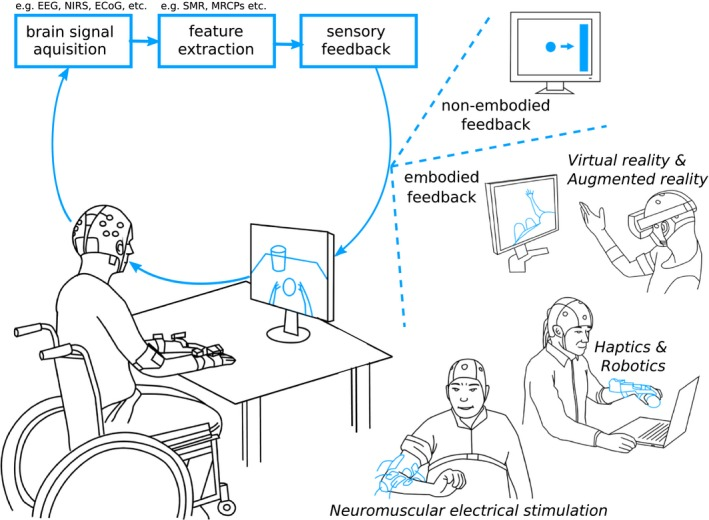

AIDE Project (Functional integration of the brain-computer interface)

AIDE is a new modular multimodal perception system that customizes an adaptive multimodal interface to the needs of people with disabilities. The multimodal interface analyzes and extracts relevant information by identifying the user's residual abilities, behaviors, emotional state and intentions, and by analyzing the environment and context factors.